Benchmarking the Qualcomm Neural Processing SDK for AI vs. TensorFlow on Android

Machine learning inference on edge devices

The Qualcomm® Neural Processing SDK for AI is designed for converting and executing deep neural networks on the Snapdragon® mobile platform without connecting to the cloud. The SDK is built for heterogeneous computing and running trained neural networks on different cores: CPU, Qualcomm® Adreno™ GPU and Qualcomm® Hexagon™ DSP.

Before investing development effort in moving inference workloads out of the cloud and onto mobile devices, it is worthwhile to run performance benchmarks.

To set up the Qualcomm Neural Processing SDK, download it from the Tools & Resources page on Qualcomm Developer Network. The page includes system requirements and offers instructions for setting up the SDK, converting models and building the sample Android application in the SDK. (Note that Android NDK version r-11 is required for proper SDK setup.)

Quantizing a model

The SDK includes utilities for converting existing models to the Deep Learning Container (.dlc) format that the Qualcomm® Neural Processing Engine (NPE) runtime reads. The SDK also includes a utility, snpe-dlc-quantize, for quantizing (optimizing) trained models to reduce their size and improve efficiency.

Once the model is converted and optimized, devices running the Qualcomm NPE runtime can load the .dlc file. The Qualcomm NPE runtime processes the data and generates predictions.
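To make the idea of quantization concrete, the following is a minimal sketch of a generic 8-bit min/max quantization scheme. It is an illustration only and is not taken from the SDK: the function names `quantize_8bit` and `dequantize_8bit` and the sample weights are hypothetical, and the exact arithmetic that snpe-dlc-quantize applies may differ.

```python
def quantize_8bit(values):
    """Map floats to integers in 0..255 using a min/max affine scheme.

    Generic illustration; not the SDK's actual algorithm.
    """
    lo = min(min(values), 0.0)          # make sure the range includes zero
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255 or 1.0      # fall back to 1.0 if the range is empty
    quantized = [round((v - lo) / scale) for v in values]
    return quantized, scale, lo


def dequantize_8bit(quantized, scale, lo):
    """Recover approximate float values from the 8-bit codes."""
    return [q * scale + lo for q in quantized]


# Made-up weights, used only to exercise the functions.
weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
codes, scale, lo = quantize_8bit(weights)
restored = dequantize_8bit(codes, scale, lo)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, max_error)
```

Each weight is stored in one byte instead of four, which is where the size reduction comes from. The cost is a rounding error of at most half a quantization step per value.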

Benchmarking against the TensorFlow framework

The following considerations apply to this benchmark exercise:

- Machine learning models: Inception-v3 is a popular image-classification network that averages about 80 percent confidence in its predictions and is suitable for developers learning about neural networks. AlexNet is trained on more than a million images and can classify images into 1,000 object categories (e.g., keyboard, mouse, pencil and many animals).

- Processor core: Benchmarking takes place on both CPU and GPU. The GPU offers parallel processing and is better suited to detection in images.

- Framework: The benchmark compares the performance of models generated by the Qualcomm Neural Processing SDK against those generated by TensorFlow, an open source library made for developing and training ML models. (Refer to the TensorFlow documentation for instructions on setting up TensorFlow for Android.)

- Workload: The application under test performs object detection and prediction. It uses the models (Inception-v3 and AlexNet) to detect and process objects in the feed from the camera.

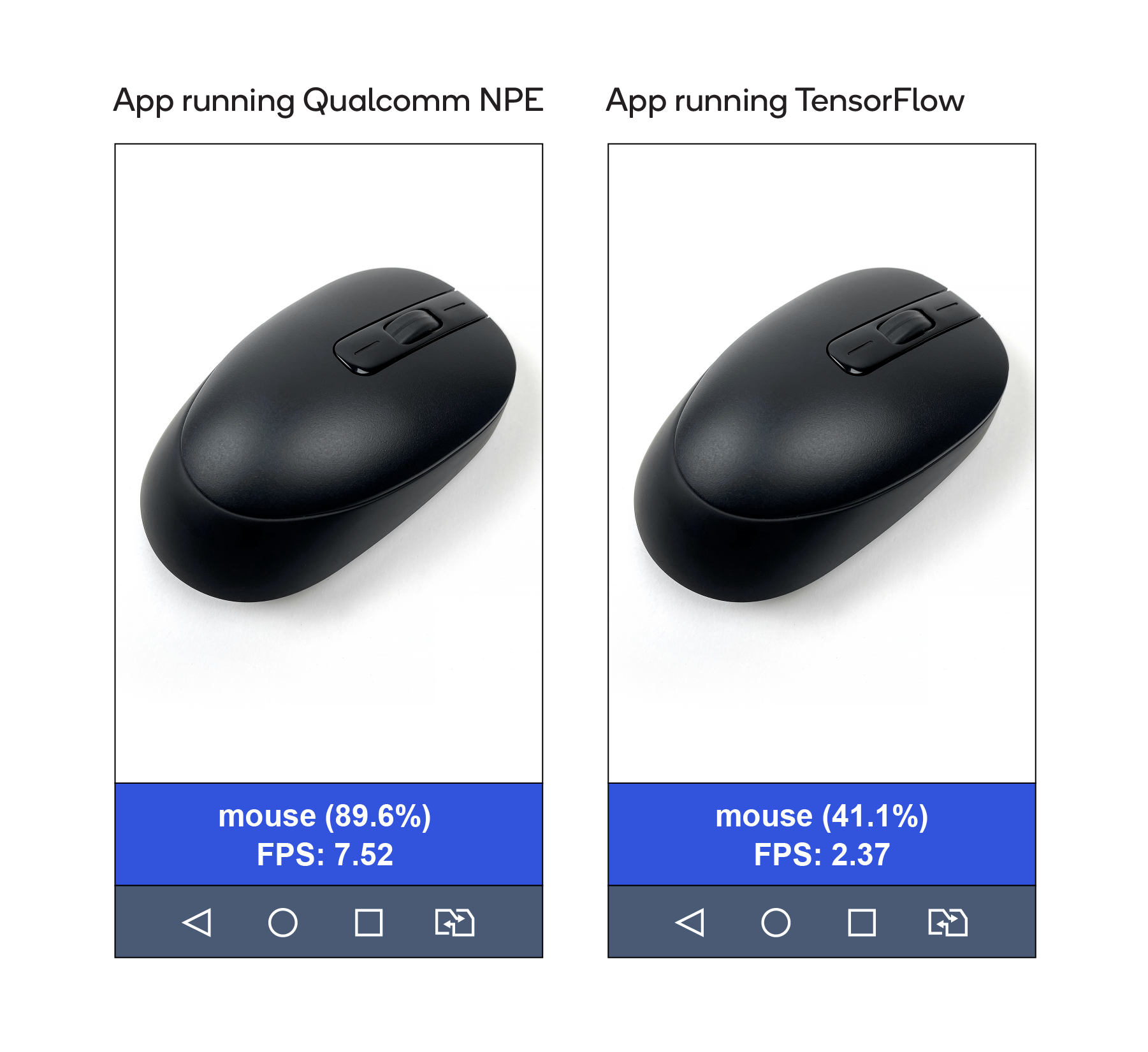

The screenshots below are taken from the applications. Both correctly identify the object as a mouse. Note, however, that the app running Qualcomm NPE indicates 89 percent confidence and 7.52 frames per second. The metrics are more than double those of the app running TensorFlow.
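A frames-per-second figure like the one shown in the apps can be obtained by timing repeated inference calls. The sketch below shows the general logic in Python for brevity; the Android apps themselves would do the equivalent in Java or C++. The names `measure_fps` and `fake_inference` are hypothetical, and the sleep stands in for a real model call.

```python
import time


def measure_fps(run_inference, frames, warmup=5):
    """Return the average frames per second of run_inference over frames."""
    # Warm-up calls are excluded from timing so one-time setup costs
    # (model loading, cache fills) do not skew the average.
    for frame in frames[:warmup]:
        run_inference(frame)

    start = time.perf_counter()
    for frame in frames:
        run_inference(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed


def fake_inference(frame):
    """Placeholder for a real model call; pretends a frame takes about 2 ms."""
    time.sleep(0.002)
    return "mouse"


fps = measure_fps(fake_inference, frames=list(range(20)))
print(f"{fps:.1f} frames per second")
```

Running the same loop with each framework's inference call, on the same device and the same frames, gives figures that can be compared directly.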

Results of benchmark testing

This type of benchmark testing can tentatively show the relative performance improvement achievable using optimization tools on various AI frameworks. The table below summarizes the main aspects of the testing, with performance using TensorFlow-Inception-v3 on CPU normalized to 1x.

| | TensorFlow Inception-v3 | Qualcomm NPE Inception-v3 | Qualcomm NPE AlexNet |
|---|---|---|---|
| Maximum performance with CPU | 1x | 1.5x | 3x to 3.5x |
| Maximum performance with GPU | 3x | 8x | 14x |
| Model format | .pb | .dlc | .dlc |
| Mobile processors supported | QTI (e.g., Snapdragon 8xx) and other (e.g., Exynos 7xxx) platforms | Only QTI (e.g., Snapdragon 8xx) platform | Only QTI (e.g., Snapdragon 8xx) platform |
| Processing also supported on DSP? | No | Yes | Yes |
| API language support | C++, Java, Python, NodeJS | C++, Java | C++, Java |
| Tool kit designed for | Training and inferencing | Inferencing | Inferencing |

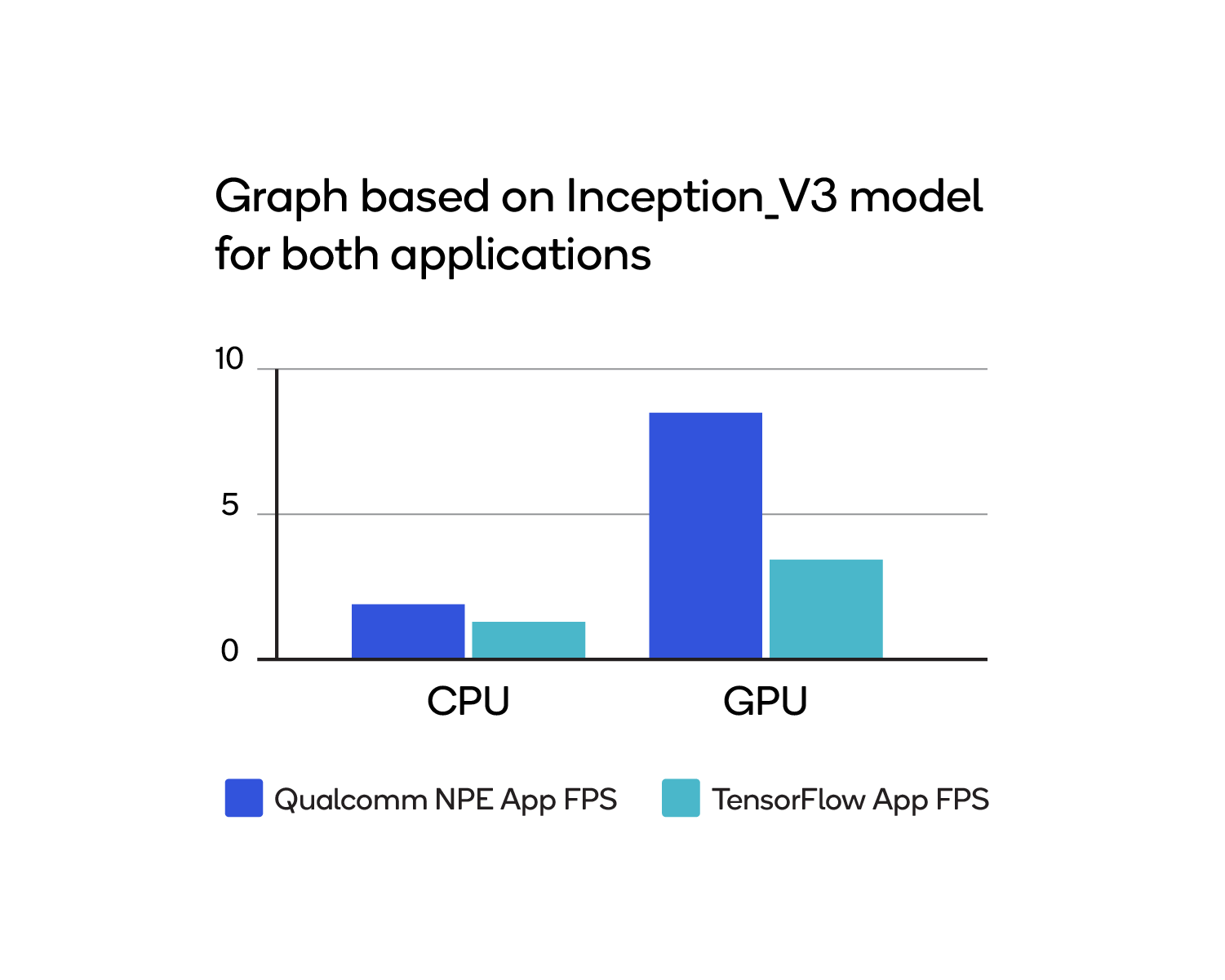

The following graph compares the orange and blue cells in the table:

Thus, when running inference with the Inception-v3 model, the application built on the Qualcomm Neural Processing SDK processes more frames per second.
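Because every figure in the table is normalized to TensorFlow Inception-v3 on CPU, the gain of one framework over the other on the same core is the ratio of the two table entries. The short sketch below works through that arithmetic for Inception-v3 using only the values from the table; the dictionary layout and the `speedup` helper are illustrative choices.

```python
# Relative performance from the table
# (TensorFlow Inception-v3 on CPU is the 1x baseline).
relative_performance = {
    ("TensorFlow", "CPU"): 1.0,
    ("TensorFlow", "GPU"): 3.0,
    ("Qualcomm NPE", "CPU"): 1.5,
    ("Qualcomm NPE", "GPU"): 8.0,
}


def speedup(framework, baseline, core):
    """Ratio of two normalized figures measured on the same core."""
    return relative_performance[(framework, core)] / relative_performance[(baseline, core)]


cpu_gain = speedup("Qualcomm NPE", "TensorFlow", "CPU")
gpu_gain = speedup("Qualcomm NPE", "TensorFlow", "GPU")
print(f"CPU: {cpu_gain:.2f}x, GPU: {gpu_gain:.2f}x")  # prints "CPU: 1.50x, GPU: 2.67x"
```

The GPU ratio of roughly 2.67x is consistent with the frame rate in the screenshots being more than double that of the TensorFlow app.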

Next step

The Qualcomm Developer Network is a source for other projects that use the Qualcomm Neural Processing SDK for AI. Developers can use the projects as prototypes in their own development efforts.

Qualcomm Neural Processing SDK, Snapdragon, Qualcomm Adreno, Qualcomm Hexagon and Qualcomm Neural Processing Engine are products of Qualcomm Technologies, Inc. and/or its subsidiaries.