Introduction

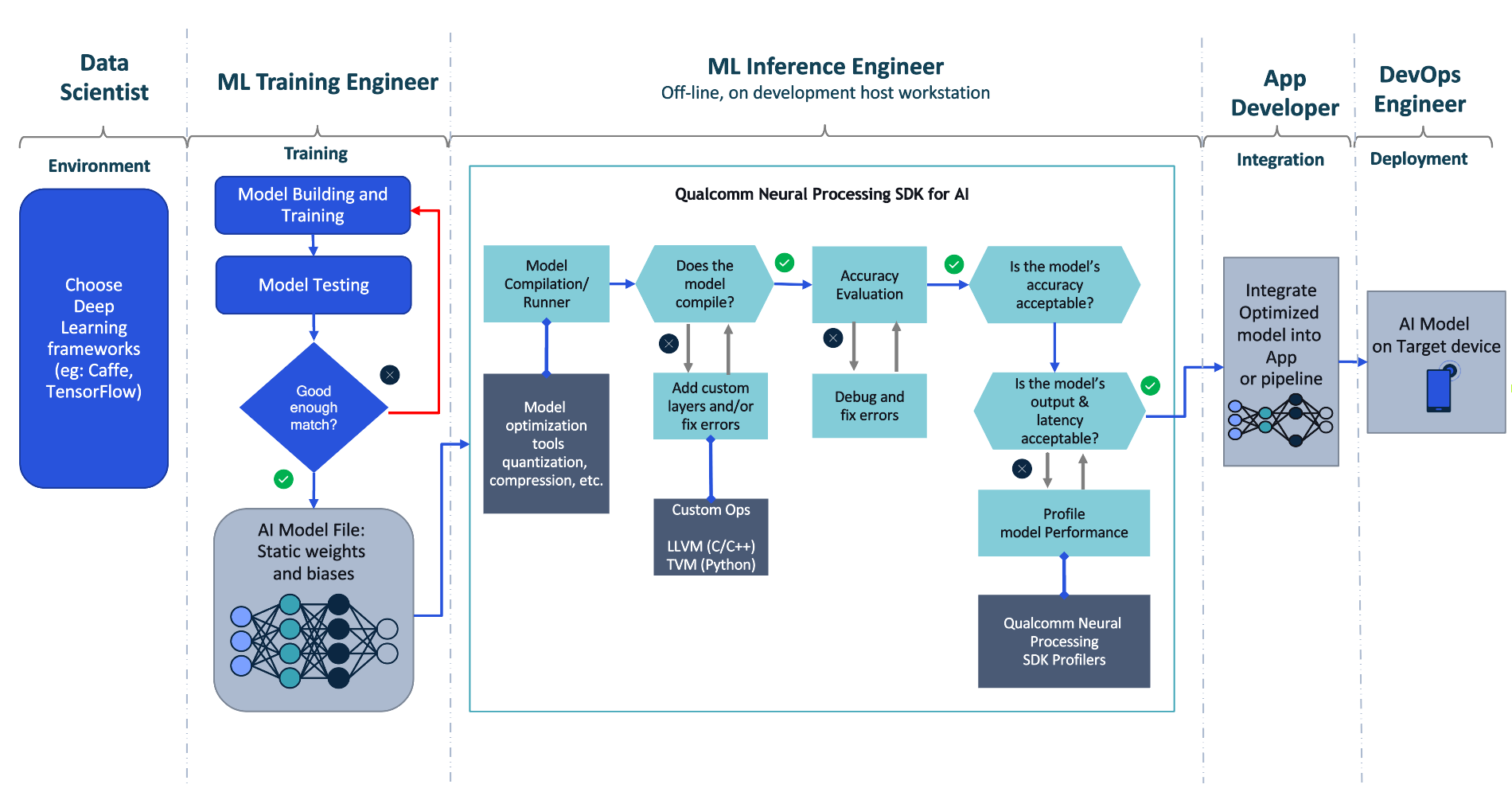

The Qualcomm® Neural Processing SDK allows developers to convert neural network models trained in ONNX or TensorFlow and run them efficiently on the CPU, GPU, or DSP of Snapdragon® mobile platforms.

Below, we show you how to set up the Qualcomm Neural Processing SDK and use it to build your first working app.

Legal Disclaimer: Your use of this guide, including but not limited to the instructions, steps, and commands below, is subject to the Website Terms of Use and may contain references to third party or open source software that is not distributed by Qualcomm Technologies, Inc. or its affiliates. You are solely responsible for the installation, configuration, and use of any such third party or open source software and compliance with any applicable licenses.

System Requirements

The following dependencies are required to use the SDK:

Common Requirements

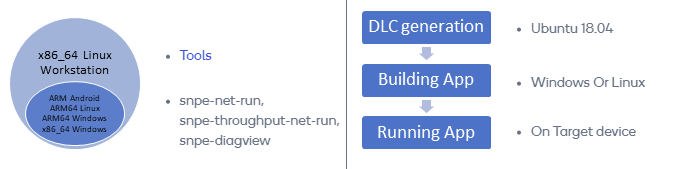

- x86_64 Linux workstation for DLC preparation, generation, and quantization

- Ubuntu 20.04 (native OS environment or WSL2 environment on Windows 10/11)

- One of the following frameworks: TensorFlow, ONNX, or TensorFlow Lite

Qualcomm Neural Processing SDK Enabled Device Requirements

- Android device for running the compiled model binary

  - (Optional) Android Studio

  - Android SDK (install via Android Studio or as stand-alone)

  - Android NDK (android-ndk-r19c-linux-x86_64) (install via Android Studio SDK Manager or as stand-alone)

- Windows ARM64 device for building apps and running the compiled model binary

  - Windows 11

  - Visual Studio 2019 16.6.0 with the Desktop development with C++ workload, including:

    - C++ CMake tools for Windows

    - MSVC v142 C++ x86 build tools – 14.24

    - MSVC v142 ARM64 build tools – 14.24

    - Windows SDK 10.0.18362
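The workstation part of these requirements (an x86_64 machine running Ubuntu 20.04, natively or under WSL2) can be checked before installing anything. The Python sketch below is a hypothetical helper, not part of the SDK. It assumes the distribution can be identified from /etc/os-release, which is also true inside WSL2, and it only checks the CPU architecture and the Ubuntu version.

```python
import platform
from pathlib import Path


def parse_os_release(text):
    """Parse KEY=value lines (the /etc/os-release format) into a dict."""
    info = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        info[key] = value.strip('"')
    return info


def workstation_problems(machine, os_release_text):
    """Return mismatches against the x86_64 + Ubuntu 20.04 requirement."""
    problems = []
    if machine != "x86_64":
        problems.append(f"expected an x86_64 workstation, found {machine!r}")
    info = parse_os_release(os_release_text)
    if (info.get("ID"), info.get("VERSION_ID")) != ("ubuntu", "20.04"):
        found = info.get("PRETTY_NAME", "an unknown OS")
        problems.append(f"expected Ubuntu 20.04, found {found}")
    return problems


if __name__ == "__main__":
    os_release = Path("/etc/os-release")
    text = os_release.read_text() if os_release.exists() else ""
    problems = workstation_problems(platform.machine(), text)
    for problem in problems:
        print("WARNING:", problem)
    if not problems:
        print("Workstation matches the common requirements.")
```

The parsing is kept separate from the checks so that the logic can be exercised with any os-release text, not just the file on the current machine.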


Set up the Qualcomm Neural Processing SDK

This step allows the SDK to work with the TensorFlow, ONNX, and TensorFlow Lite frameworks via Python APIs. Follow the steps below to set up the SDK on Ubuntu 20.04:

- Download the latest version of the SDK.

- Unpack the SDK’s .zip file to an appropriate location (e.g., ~/snpe-sdk).

- Install the SDK’s dependencies:

sudo apt-get install python3-dev python3-matplotlib python3-numpy python3-protobuf python3-scipy python3-skimage python3-sphinx wget zip

- Verify that all dependencies are installed:

~/snpe-sdk/bin/dependencies.sh

- Verify that the Python dependencies are installed:

source ~/snpe-sdk/bin/check_python_depends.sh

- Initialize the Qualcomm Neural Processing SDK environment:

cd ~/snpe-sdk/

export ANDROID_NDK_ROOT=~/Android/Sdk/ndk-bundle

Note: set ANDROID_NDK_ROOT to the location of the Android NDK on your system (for example, the ndk-bundle folder inside the Android SDK directory that Android Studio uses).

- Set up your SDK’s environment based on your chosen ML framework:

Environment Setup for TensorFlow

From the SDK root directory ($SNPE_ROOT, e.g., ~/snpe-sdk), run the following script to set up the SDK’s environment for TensorFlow:

source bin/envsetup.sh -t $TENSORFLOW_DIR

where $TENSORFLOW_DIR is the path to the TensorFlow installation.

Environment Setup for ONNX

From the SDK root directory, run the following script to set up the SDK’s environment for ONNX:

source bin/envsetup.sh -o $ONNX_DIR

where $ONNX_DIR is the path to the ONNX installation.

Environment Setup for TensorFlow Lite

From the SDK root directory, run the following script to set up the SDK’s environment for TensorFlow Lite:

source bin/envsetup.sh

The initialization will do the following:

- Set or update $SNPE_ROOT, $PATH, $LD_LIBRARY_PATH, and $PYTHONPATH, plus $TENSORFLOW_DIR or $ONNX_DIR depending on the chosen framework

- Copy the Android NDK libgnustl_shared.so library locally

- Update the Android AAR archive
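After sourcing envsetup.sh, a quick preflight check can confirm that the steps above took effect. Run it from the same shell session, because environment variables set by a sourced script only apply to that shell. This is a hypothetical sketch, not an SDK tool. The module names are assumptions about what the apt packages above provide (for example, python3-protobuf installs google.protobuf and python3-skimage installs skimage). The PATH/PYTHONPATH test only looks for some entry under $SNPE_ROOT rather than a specific SDK subdirectory.

```python
import importlib.util
import os

# Assumed import names for the python3-* apt packages installed earlier.
REQUIRED_MODULES = ("numpy", "scipy", "matplotlib", "skimage", "google.protobuf", "sphinx")
REQUIRED_ENV = ("SNPE_ROOT", "ANDROID_NDK_ROOT")


def missing_modules(names=REQUIRED_MODULES):
    """Return the module names that cannot be found on the current sys.path."""
    missing = []
    for name in names:
        try:
            found = importlib.util.find_spec(name) is not None
        except ModuleNotFoundError:
            # find_spec imports the parent of a dotted name; a missing parent ends up here.
            found = False
        if not found:
            missing.append(name)
    return missing


def env_problems(env):
    """Check that the required variables are set and that PATH/PYTHONPATH mention SNPE_ROOT."""
    problems = [f"{var} is not set" for var in REQUIRED_ENV if not env.get(var)]
    root = env.get("SNPE_ROOT")
    if root:
        for var in ("PATH", "PYTHONPATH"):
            entries = env.get(var, "").split(os.pathsep)
            if not any(entry.startswith(root) for entry in entries):
                problems.append(f"{var} has no entry under SNPE_ROOT ({root})")
    return problems


if __name__ == "__main__":
    for name in missing_modules():
        print("missing Python module:", name)
    for problem in env_problems(dict(os.environ)):
        print("environment:", problem)
```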

Download ML Models and Convert them to .dlc Format

The Qualcomm Neural Processing SDK does not bundle any model files, but it contains scripts to download some popular publicly available models and convert them to Qualcomm Technologies’ proprietary Deep Learning Container (.dlc) format. Follow the steps below to use these scripts:

- Run the following commands to download and convert a pre-trained inception_v3 example in TensorFlow format:

cd $SNPE_ROOT

python3 ./models/inception_v3/scripts/setup_inceptionv3.py -a ./temp-assets-cache -d

Tip: take a look at the setup_inceptionv3.py script, which also quantizes the model to reduce its size by almost 75% (from 91 MB to 23 MB).
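The "almost 75%" figure follows from the storage format. A 32-bit float weight takes 4 bytes and an 8-bit quantized weight takes 1, so weight storage shrinks by roughly 4x (23 MB / 91 MB ≈ 0.25). The sketch below illustrates generic asymmetric min-max 8-bit quantization. It is an illustration of the idea only: the function names are hypothetical, and the exact encoding scheme used by the SDK's quantizer may differ.

```python
def size_reduction(original_mb, quantized_mb):
    """Fractional size reduction, e.g. 0.75 for a file that became 4x smaller."""
    return 1.0 - quantized_mb / original_mb


def quantize_uint8(values):
    """Asymmetric min-max quantization of floats to 8-bit codes.

    Returns (codes, scale, zero_point) so that value ~= scale * (code - zero_point).
    """
    lo = min(min(values), 0.0)  # widen the range to include 0.0 ...
    hi = max(max(values), 0.0)  # ... so that zero stays exactly representable
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for an all-zero input
    zero_point = round(-lo / scale)
    codes = [max(0, min(255, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def dequantize_uint8(codes, scale, zero_point):
    """Map 8-bit codes back to approximate float values."""
    return [scale * (c - zero_point) for c in codes]


if __name__ == "__main__":
    print(f"{size_reduction(91, 23):.1%}")  # prints 74.7%
    weights = [-1.5, -0.2, 0.0, 0.7, 2.0]
    codes, scale, zp = quantize_uint8(weights)
    restored = dequantize_uint8(codes, scale, zp)
    print(codes, zp)
    print("max reconstruction error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each float is mapped to an integer code in 0..255 through one scale and one zero point. The small reconstruction error is the accuracy cost paid for the 4x smaller weights.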

Build the SDK’s Example Android App

The Qualcomm Neural Processing SDK includes an example Android app that combines the Snapdragon NPE runtime (provided by the $SNPE_ROOT/android/snpe-release.aar Android library) and the DLC model example described above.

Follow the steps below to build this example app:

- Run the following commands to prepare the app by copying the runtime and the model:

cd $SNPE_ROOT/examples/android/image-classifiers

cp ../../../android/snpe-release.aar ./app/libs # copies the NPE runtime library

bash ./setup_models.sh # packages the Alexnet example (DLC, labels, inputs) as an Android resource file

- Choose one of the following options to build the APK:

Option A: Build the APK in Android Studio:

- Launch Android Studio.

- Open the project in ~/snpe-sdk/examples/android/image-classifiers.

- Accept the Android Studio suggestions to upgrade the build system components, if offered.

- Click the Run 'app' button to build and run the APK.

Option B: Execute the following command to build the APK from the command line:

sudo apt-get install libc6:i386 libncurses5:i386 libstdc++6:i386 lib32z1 libbz2-1.0:i386

./gradlew assembleDebug

Note: the command above will likely require ANDROID_HOME and JAVA_HOME to be set to the locations of the Android SDK and the JDK on your system.
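Option B depends on ANDROID_HOME, JAVA_HOME, and the runtime library copied into app/libs. A small wrapper can therefore check those before invoking Gradle. The helper names are hypothetical. The only external command the sketch runs is the ./gradlew assembleDebug call shown above.

```python
import os
import subprocess
from pathlib import Path


def build_problems(project_dir: Path, env: dict) -> list:
    """List reasons why `./gradlew assembleDebug` is likely to fail."""
    problems = []
    for var in ("ANDROID_HOME", "JAVA_HOME"):
        value = env.get(var)
        if not value or not Path(value).is_dir():
            problems.append(f"{var} is unset or does not point to a directory")
    if not (project_dir / "app" / "libs" / "snpe-release.aar").is_file():
        problems.append("app/libs/snpe-release.aar is missing (copy it from $SNPE_ROOT/android)")
    if not (project_dir / "gradlew").is_file():
        problems.append("gradlew was not found in the project directory")
    return problems


def assemble_debug(project_dir: Path) -> int:
    """Run the debug build if the preflight checks pass; return an exit code."""
    problems = build_problems(project_dir, dict(os.environ))
    for problem in problems:
        print("cannot build:", problem)
    if problems:
        return 1
    # Same command as Option B, executed from the project directory.
    return subprocess.run(["./gradlew", "assembleDebug"], cwd=project_dir).returncode
```

For example, call assemble_debug(Path(os.environ["SNPE_ROOT"]) / "examples/android/image-classifiers") from the shell where envsetup.sh was sourced. Checking first turns a long Gradle failure into a short list of missing prerequisites.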

Build Windows App

This section demonstrates how to build a C++ sample application that can execute neural network models on a Windows PC or Windows device.

Using CMake to Build SampleCode

- Unzip the SDK and open its location in a Command Prompt run as Administrator.

- Register a system environment variable for the Qualcomm Neural Processing SDK:

setx SNPE_SDK_ROOT "%cd%" /m && set "SNPE_SDK_ROOT=%cd%" && set SNPE_SDK_ROOT

- Generate an executable file and SNPE.dll:

cd %SNPE_SDK_ROOT%/examples/NativeCpp/SampleCode_Windows

mkdir build & cd build

cmake ../ -G "Visual Studio 16 2019" -A ARM64

Set -A to the platform the app will run on: x64 or ARM64.

cmake --build ./ --config Release

Alternatively, open the generated .sln file in Visual Studio and build it there.
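The two CMake steps can also be scripted, which makes the x64/ARM64 choice explicit and rejects anything else early. This sketch uses only the CMake commands shown above. The function names are illustrative, and the sketch assumes cmake is on the PATH and Visual Studio 2019 is installed.

```python
import subprocess
from pathlib import Path

GENERATOR = "Visual Studio 16 2019"
SUPPORTED_PLATFORMS = ("x64", "ARM64")


def configure_cmd(platform: str, source_dir: str = "../") -> list:
    """Build the CMake configure command for one target platform."""
    if platform not in SUPPORTED_PLATFORMS:
        raise ValueError(f"platform must be one of {SUPPORTED_PLATFORMS}, got {platform!r}")
    return ["cmake", source_dir, "-G", GENERATOR, "-A", platform]


def build_cmd(config: str = "Release") -> list:
    """Build the CMake build command for the chosen configuration."""
    return ["cmake", "--build", "./", "--config", config]


def build_sample(sample_dir: Path, platform: str = "ARM64") -> None:
    """Configure and build in sample_dir/build, mirroring the manual steps."""
    build_dir = sample_dir / "build"
    build_dir.mkdir(exist_ok=True)
    subprocess.run(configure_cmd(platform), cwd=build_dir, check=True)
    subprocess.run(build_cmd(), cwd=build_dir, check=True)
```

For example, build_sample(Path(os.environ["SNPE_SDK_ROOT"]) / "examples/NativeCpp/SampleCode_Windows", "ARM64") would repeat the manual steps. With check=True, a failing CMake step raises an exception instead of continuing silently.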

Run a network

- Copy snpe-sample.exe, SNPE.dll, and the sample resources (inception_v3.dlc, target_raw_list.txt, and the four raw files generated by the setup_inceptionv3.py script above) to the Windows on Snapdragon (WoS) device.

Note: the raw file paths in target_raw_list.txt are relative to the location the executable runs from, so make sure they match where you copied the raw files.

For example, if the raw files are placed in the same folder as the executable, target_raw_list.txt should contain:

trash_bin.raw

notice_sign.raw

chairs.raw

plastic_cup.raw

- For the DSP runtime, copy the DSP shared object (.so) and dynamic-link library (.dll) files for your chipset to the same folder:

SC8180X: %SNPE_SDK_ROOT%/lib/dsp/libSnpeDspV66Skel.so

SC8180X: %SNPE_SDK_ROOT%/lib/aarch64-windows-vc19/SnpeDspV66Stub.dll

SC8280X: %SNPE_SDK_ROOT%/lib/dsp/libSnpeHtpV68Skel.so

SC8280X: %SNPE_SDK_ROOT%/lib/aarch64-windows-vc19/SnpeHtpV68Stub.dll

Note: supported chipsets from Qualcomm® Kryo™ CPU, Snapdragon 8c

Note: for the DSP runtime, quantize the DLC and add the DSP/HTP graph to it on the Ubuntu 20.04 workstation:

snpe-dlc-quant --input_dlc inception_v3.dlc --output_dlc inception_v3_quantized.dlc --input_list target_raw_list.txt

snpe-dlc-graph-prepare --input_dlc inception_v3_quantized.dlc --output_dlc inception_v3_quantized_cached.dlc --input_list target_raw_list.txt

- Run the executable with a network in the Command Prompt. The output tensors are dumped to the ‘output’ folder as raw binary files.

snpe-sample.exe --container inception_v3_quantized_cached.dlc --input_list target_raw_list.txt --output_dir output --runtime dsp

The program prints output similar to the following:

Batch size for the container is 1

Processing DNN Input: trash_bin.raw

Processing DNN Input: notice_sign.raw

Processing DNN Input: chairs.raw

Processing DNN Input: plastic_cup.raw
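The preparation on the WoS device can be sketched in Python: writing target_raw_list.txt with paths relative to the run folder, copying the chipset-specific DSP files, and inspecting a raw output tensor. The chipset-to-file table mirrors the list above. The helper names are hypothetical. top_k assumes that each output .raw file holds float32 values in native byte order, which the text above does not state. The text also does not say which file under output/ holds the classification scores, so pass its path explicitly.

```python
import array
import shutil
from pathlib import Path

# Chipset -> (skel .so, stub .dll), relative to %SNPE_SDK_ROOT%, as listed above.
DSP_FILES = {
    "SC8180X": ("lib/dsp/libSnpeDspV66Skel.so", "lib/aarch64-windows-vc19/SnpeDspV66Stub.dll"),
    "SC8280X": ("lib/dsp/libSnpeHtpV68Skel.so", "lib/aarch64-windows-vc19/SnpeHtpV68Stub.dll"),
}


def write_input_list(run_dir: Path, list_name: str = "target_raw_list.txt") -> list:
    """List every .raw file in run_dir by bare name, i.e. relative to run_dir."""
    names = sorted(p.name for p in run_dir.glob("*.raw"))
    (run_dir / list_name).write_text("\n".join(names) + "\n")
    return names


def copy_dsp_files(sdk_root: Path, chipset: str, run_dir: Path) -> list:
    """Copy the skel/stub pair for one chipset next to snpe-sample.exe."""
    if chipset not in DSP_FILES:
        raise ValueError(f"unknown chipset {chipset!r}; expected one of {sorted(DSP_FILES)}")
    copied = []
    for rel in DSP_FILES[chipset]:
        src = sdk_root / rel
        shutil.copy2(src, run_dir / src.name)
        copied.append(src.name)
    return copied


def top_k(raw_path: Path, k: int = 5) -> list:
    """Return the k highest (index, score) pairs, assuming float32 raw data."""
    scores = array.array("f")
    scores.frombytes(raw_path.read_bytes())
    return sorted(enumerate(scores), key=lambda pair: pair[1], reverse=True)[:k]
```

write_input_list sorts the file names, so the order can differ from the example list. The order only changes which input is processed first. For a classifier output, the indices returned by top_k would be looked up in the model's label file.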

Snapdragon and Qualcomm Neural Processing SDK are products of Qualcomm Technologies, Inc. and/or its subsidiaries.