
Description of Dataset

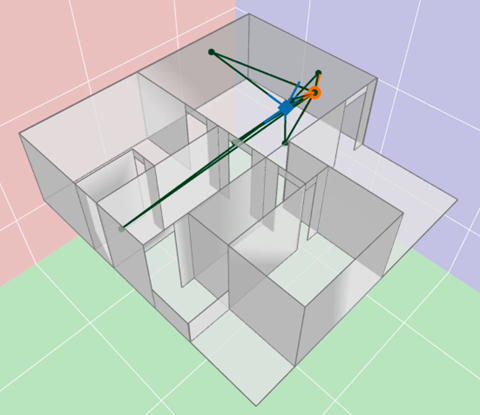

The Wireless Indoor Simulations dataset contains a large set of channels to enable a better understanding of the interplay between the propagation environment (e.g., materials, geometry) and corresponding channel effects (e.g., delay, receive power).

The dataset is in two...

“It’s a good model,” you say, thinking about the model you’ve trained in the cloud for your machine learning application. “I just wish we could fine-tune it on the user’s device.”

Now you can do just that.

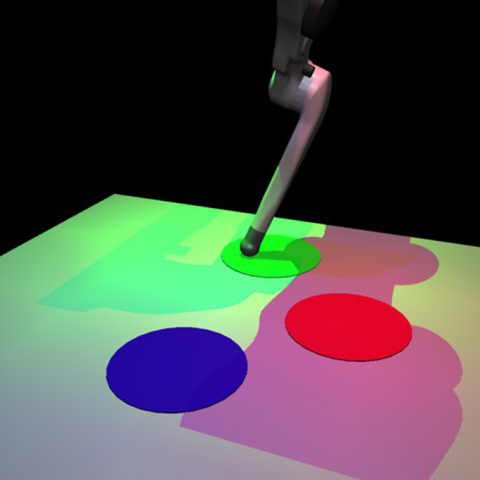

CausalCircuit is a dataset designed to guide research into causal representation learning – the problem of identifying the high-level causal variables in an image together with the causal structure between them.

The dataset consists of images that show a robotic arm interacting with a system of...

What do on-demand language translation, self-driving cars, and video calls have in common? They're all examples of today's ubiquitous technology that was once a figment of science fiction (sci-fi).

You like running your machine learning (ML) workloads with the Qualcomm Adreno OpenCL ML SDK on Adreno GPUs.

The international embedded computing community marked its 20th anniversary with this year’s Embedded World conference in Nuremberg.

When you look at your robotics application, do you think of it as an "intelligent-edge use case"? Probably not, but that's where it plays.

In many industrial settings, determining the current health of assets still involves a technician putting their ear to a machine to detect any ominous sound deviations.

Today at Microsoft Build 2022, Qualcomm Technologies is announcing that we are opening up our industry-leading Qualcomm AI Engine for Windows developers with the Qualcomm Neural Processing SDK for Windows.

Hands up if you were on a video call this week. And keep your hands up if you got distracted by someone on the call typing, their dog barking, their kids playing, or other background noise. You were not alone.

You can get a lot of innovation out of running machine learning inference on mobile devices, but what if you could also train your models on mobile devices? What would you invent if you could fine-tune your models at the network edge?

Were you able to attend this year’s AWS re:Invent from November 29 – December 3?

Qualcomm AI Research showcases its cutting-edge advancements in machine learning

Cooking with Snapdragon is a video on which we recently collaborated with WIRED Brand Lab, and published on

The 2021 ARM DevSummit was held from October 19 to 21, 2021.

The Qualcomm AI Developer Conference was held in September 2021 in Chengdu, China, and the theme this year was presenting a new era of the intelligent interconnection of technologies. Heng Xu, Sr.

Data is at the heart of modern business, providing real-time insight and control over day-to-day operations. This is facilitated by the explosion of the Internet of Things (IoT), with billions of devices collecting zettabytes of data. As IoT grows, so does the amount of data to be processed...

To run neural networks efficiently at the edge on mobile, IoT, and other embedded devices, developers strive to optimize their machine learning (ML) models' size and complexity while taking advantage of hardware acceleration for inference.